The Confidence to Act Must Be Grounded in Rigorous Validation

Many tools claim to be validated, but few hold up under rigorous statistical scrutiny. As a result, their claims offer false confidence. We set a higher standard for ourselves: to act with true confidence, grounded in science.

AgileBrain has been rigorously tested and validated, allowing it to profile emotions via quantitative, replicable data that can withstand statistical scrutiny. AgileBrain generates personal insight to help inform a self-development journey and can be aggregated across individuals to reveal organizational insights at any level (teams, departments, companies, etc.).

Validation

AgileBrain has been subjected to extensive reliability testing and validation.

Reliability and validity both describe how well an instrument measures. Reliability refers to the consistency of the measure: how closely a set of items behaves as a group (that is, whether the items all measure the same thing). Validity refers to the accuracy of the measure: whether it measures what we believe it measures.

Reliability

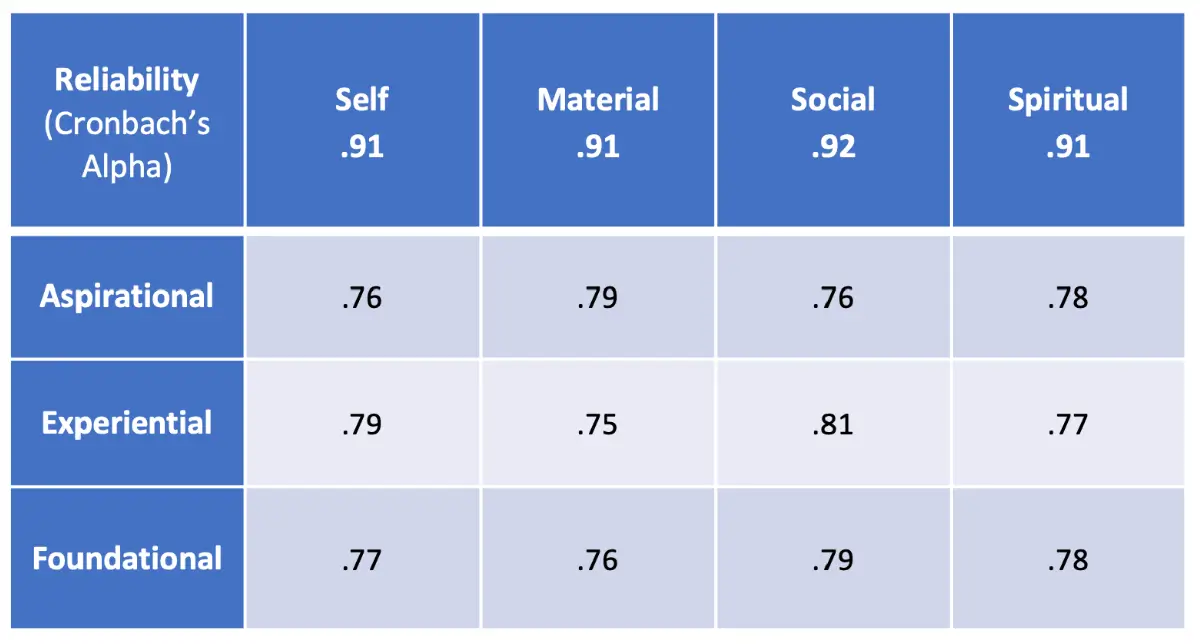

Before looking at the validity of any assessment instrument, we must ensure that it measures the construct of interest consistently. The accepted statistical test of reliability is Cronbach’s Alpha. Researchers say that a model “holds together” well if it achieves an Alpha of .60 and achieves a “gold standard of reliability” with an Alpha of .70 or more.
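To make the statistic concrete, here is a minimal sketch of how Cronbach's Alpha is commonly computed: the number of items divided by one less than that number, multiplied by one minus the ratio of the summed item variances to the variance of respondents' total scores. The function name `cronbach_alpha` and the sample scores are hypothetical illustrations, not AgileBrain data or the procedure used in the validation study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    n_items = len(responses[0])
    if n_items < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*responses)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    return (n_items / (n_items - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: five respondents answering three items on a 1-5 scale.
scores = [
    [4, 5, 4],
    [2, 3, 2],
    [5, 5, 4],
    [1, 2, 1],
    [3, 3, 3],
]
alpha = cronbach_alpha(scores)
print(round(alpha, 2))  # prints 0.98
```

In this made-up example the three items rise and fall together across respondents, so the Alpha is high. Items that moved independently of one another would push the value toward zero.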

The table below displays the Alphas for the four domains and the twelve individual emotional needs cells:

AgileBrain achieves Alphas of .90 or greater (far over the .70 “gold standard”) in each of the four emotional domains and .75 or greater (again, well over the .70 “gold standard”) in each of the twelve emotional needs cells.

Validity

Beyond reliability, we need to establish evidence that the assessment measures what we believe it measures. Construct validity addresses the question: Does the measure correspond to some meaningful psychological construct? We used construct validation procedures to test whether AgileBrain scores explain membership in groups identified from independent survey data.

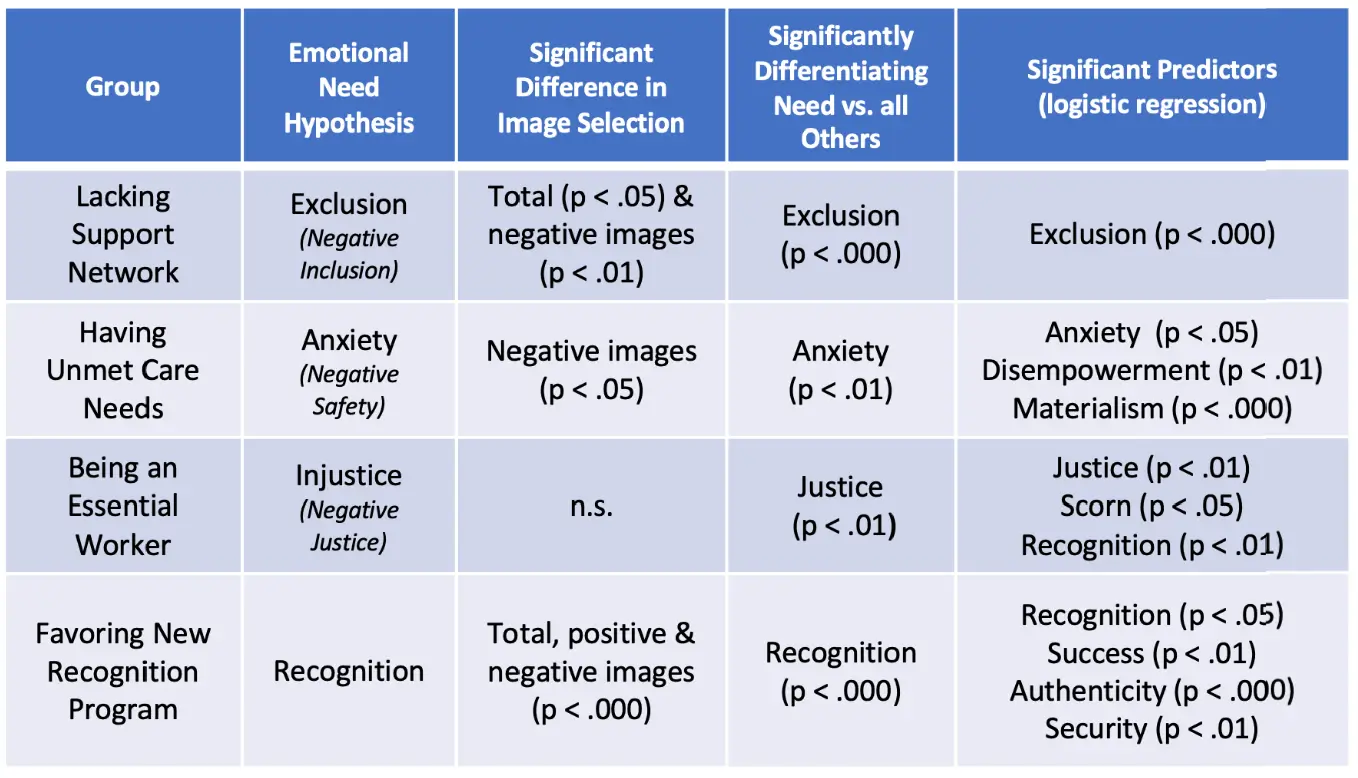

The table below lists several groups identified from survey data, the emotional need hypothesized for each, and the degree to which AgileBrain differentiated between members of the group and the general population on that variable. The last column lists AgileBrain variables other than the hypothesized variable that were also significant predictors of group membership.

AgileBrain successfully confirmed the emotional need areas hypothesized for the identified groups. For example, the first group in the table, people who self-identified as lacking a support network, scored highest on Exclusion (the negative of Inclusion), and the difference from the general population was highly statistically significant.
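The text above does not say which statistical test was used to compare each group with the general population. As one illustration of how such a comparison can be checked, the sketch below runs a two-sided permutation test on the difference in mean scores. The function name `permutation_test`, the resample count, and all of the scores are hypothetical assumptions, not AgileBrain data or the study's actual method.

```python
import random
from statistics import mean


def permutation_test(group, population, n_resamples=2000, seed=0):
    """Two-sided permutation test on the difference in means.

    Returns (observed difference, estimated p-value).
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    observed = mean(group) - mean(population)
    pooled = list(group) + list(population)
    n_group = len(group)
    extreme = 0
    for _ in range(n_resamples):
        # Reassign group labels at random and recompute the difference.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_group]) - mean(pooled[n_group:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimate away from exactly zero.
    return observed, (extreme + 1) / (n_resamples + 1)


# Hypothetical Exclusion scores (illustrative numbers only).
lacking_support = [7, 8, 6, 9, 8, 7]
general_population = [4, 5, 3, 5, 4, 6, 4, 5]

observed, p_value = permutation_test(lacking_support, general_population)
print(f"difference in means: {observed:.1f}, p-value: {p_value:.4f}")
```

A small p-value here means that random relabeling of respondents rarely produces a gap as large as the observed one, which is the sense in which a group difference is called statistically significant.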

Criterion-Related Validity

Criterion-related validity examines the relationship of the measure in question, here AgileBrain emotional profiles, with other outcome variables that should be related.

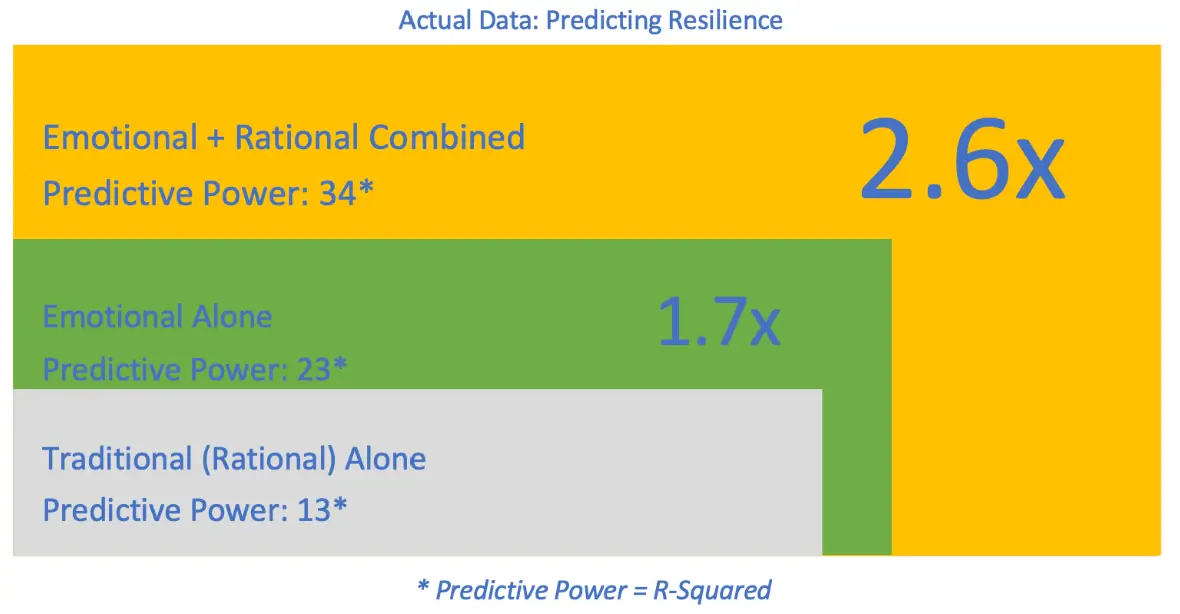

We have run multiple regression analyses using AgileBrain both as a stand-alone predictor and in combination with traditional (rational) assessment techniques to evaluate its predictive power. The results have consistently shown that AgileBrain adds substantial power in predicting a variety of important outcomes. The illustration below shows the added power in predicting an individual’s resilience during the COVID-19 pandemic.

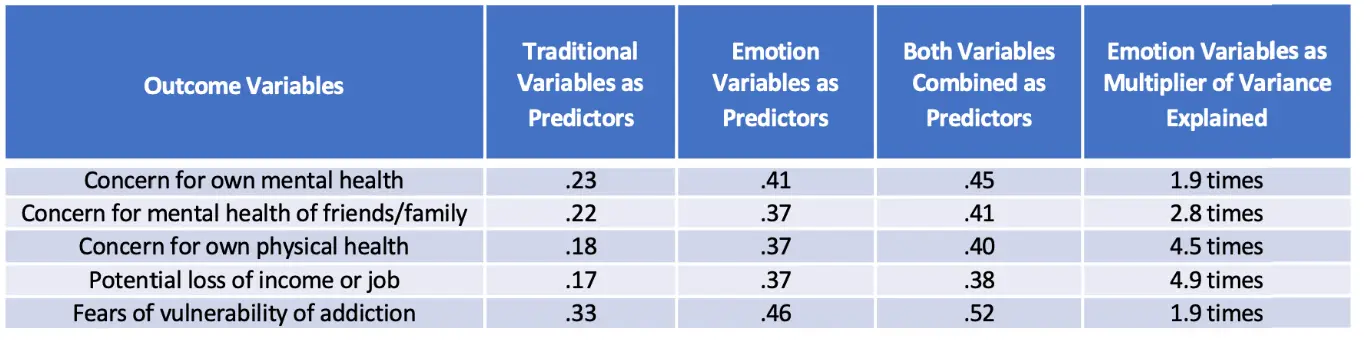
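One common way to quantify added predictive power in a multiple regression is the gain in R-squared when a new predictor joins a baseline model. The sketch below assumes that interpretation; the source does not specify the exact metric or model. The helper `fit_r_squared`, the variable names, and every number are hypothetical, chosen only to show the mechanics.

```python
def fit_r_squared(predictors, outcome):
    """R-squared of an ordinary least squares fit with an intercept.

    predictors: list of rows, one list of predictor values per respondent.
    """
    rows = [[1.0] + [float(v) for v in row] for row in predictors]
    p = len(rows[0])
    # Augmented normal equations: (A^T A | A^T y).
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(p)]
        + [sum(r[i] * y for r, y in zip(rows, outcome))]
        for i in range(p)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(p):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    beta = [aug[i][p] / aug[i][i] for i in range(p)]
    y_mean = sum(outcome) / len(outcome)
    ss_res = sum(
        (y - sum(b * v for b, v in zip(beta, r))) ** 2
        for r, y in zip(rows, outcome)
    )
    ss_tot = sum((y - y_mean) ** 2 for y in outcome)
    return 1 - ss_res / ss_tot


# Hypothetical scores for six respondents (illustrative numbers only).
traditional = [1, 2, 3, 4, 5, 6]   # score from a traditional assessment
emotional = [2, 1, 4, 3, 6, 5]     # score from an emotional profile
resilience = [5.1, 3.8, 11.2, 9.9, 17.0, 16.1]

r2_base = fit_r_squared([[t] for t in traditional], resilience)
r2_full = fit_r_squared([[t, e] for t, e in zip(traditional, emotional)], resilience)
lift = r2_full - r2_base
print(f"baseline R^2: {r2_base:.3f}, combined R^2: {r2_full:.3f}, lift: {lift:.3f}")
```

Because the baseline model is nested inside the combined one, the combined R-squared can never be lower. The size of the gain, rather than its sign, is what indicates whether the added predictor carries new information.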

We saw similar or greater “lift” in predictive power when adding AgileBrain to models of a wide range of outcome variables, including those displayed in the table below: